The landscape of computation is undergoing a profound transformation, with quantum computing emerging as a potential complement to the classical paradigm that has defined technological progress for decades. Within this rapidly evolving field, Google has positioned itself near the forefront, investing resources and talent in harnessing quantum mechanics for practical applications. The recent advancements from Google’s Quantum AI team represent a significant milestone: the field appears to be crossing a critical threshold, moving from theoretical exploration towards tangible computational power. This article examines the significance of this breakthrough and its implications for science, industry, and the future of problem-solving.

The Classical Computing Limit and the Quantum Promise

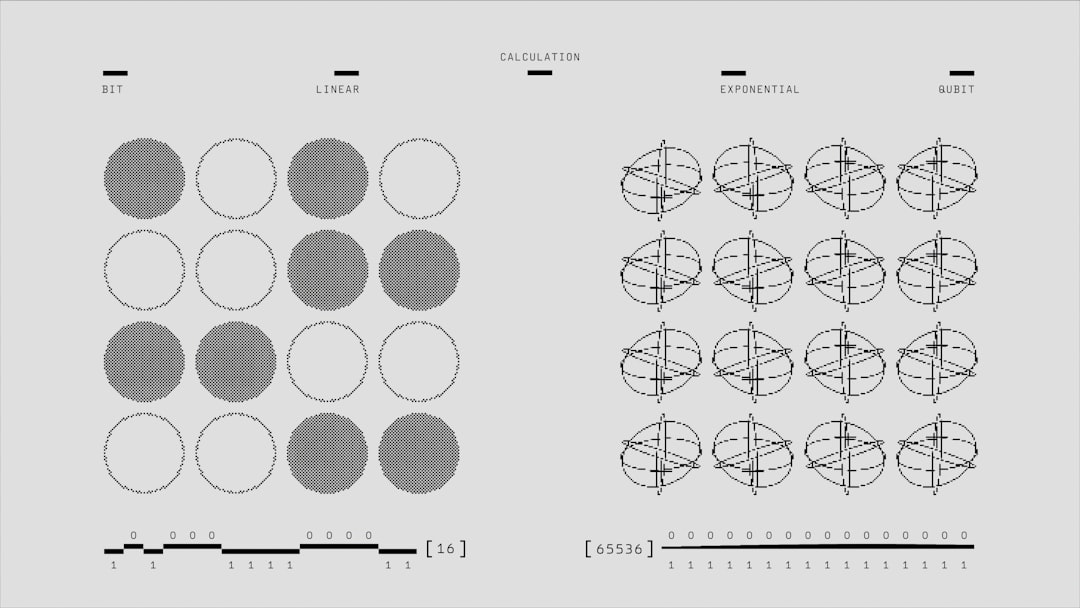

Classical computers, built upon the foundation of bits representing either a 0 or a 1, have achieved remarkable feats of processing power. However, they encounter fundamental limitations when tasked with simulating complex molecular interactions, optimizing intricate logistic networks, or breaking modern encryption algorithms. These problems exhibit an exponential growth in difficulty as their scale increases, quickly overwhelming even the most powerful supercomputers.

Quantum computing, on the other hand, leverages the peculiar principles of quantum mechanics to represent and process information in fundamentally different ways. Instead of bits, quantum computers utilize qubits. A qubit, unlike a classical bit, can exist not only as a 0 or a 1 but also in a superposition of both states. A register of n qubits is described by 2^n amplitudes, and quantum algorithms manipulate those amplitudes so that interference raises the probability of measuring a correct answer. A measurement still returns only a single outcome, so the advantage comes from carefully arranged interference rather than from reading out many answers at once.
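
As a minimal sketch of superposition, assume a single qubit is modeled as a pair of complex amplitudes. The function names `hadamard` and `probabilities` are illustrative and do not come from any quantum SDK. The code applies a Hadamard gate to the 0 state and computes the measurement probabilities with the Born rule.

```python
import math

def hadamard(state):
    """Apply a Hadamard gate to a single-qubit state (a, b)."""
    a, b = state
    s = 1 / math.sqrt(2)
    return (s * (a + b), s * (a - b))

def probabilities(state):
    """Born rule: probability of measuring 0 and of measuring 1."""
    a, b = state
    return (abs(a) ** 2, abs(b) ** 2)

zero = (1 + 0j, 0 + 0j)      # the qubit starts in the definite state 0
plus = hadamard(zero)        # equal superposition of 0 and 1
p0, p1 = probabilities(plus)
print(p0, p1)                # each is approximately 0.5

# Applying the gate twice returns the qubit to 0: the amplitudes interfere.
back = hadamard(plus)
print(probabilities(back))
```

Each measurement of the superposed state yields a single 0 or 1, each with probability of roughly one half. The second call shows interference: the two paths to the 1 outcome cancel, and the qubit returns to 0.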

Beyond superposition, quantum entanglement is another cornerstone of quantum computation. Entanglement links the states of multiple qubits, meaning that the measurement outcomes of one qubit are intrinsically correlated with those of the others, regardless of the physical distance separating them. These correlations have no classical counterpart, and quantum algorithms rely on them to outperform classical methods.
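
The following sketch illustrates entanglement under the assumption that a two-qubit state is stored as four amplitudes in the order 00, 01, 10, 11. The helper names `apply_h_on_first` and `apply_cnot` are hypothetical. A Hadamard gate followed by a CNOT gate produces a Bell state, the simplest entangled state.

```python
import math

def apply_h_on_first(state):
    """Hadamard on the first qubit of a two-qubit state [a00, a01, a10, a11]."""
    s = 1 / math.sqrt(2)
    a00, a01, a10, a11 = state
    return [s * (a00 + a10), s * (a01 + a11), s * (a00 - a10), s * (a01 - a11)]

def apply_cnot(state):
    """CNOT with the first qubit as control: swaps the 10 and 11 amplitudes."""
    a00, a01, a10, a11 = state
    return [a00, a01, a11, a10]

start = [1.0, 0.0, 0.0, 0.0]                  # both qubits in state 0
bell = apply_cnot(apply_h_on_first(start))    # (|00> + |11>) / sqrt(2)

labels = ["00", "01", "10", "11"]
probs = {label: abs(amp) ** 2 for label, amp in zip(labels, bell)}
print(probs)
```

Only the outcomes 00 and 11 have non-zero probability, so measuring one qubit fixes the result of the other. The correlation cannot be used to send a message, because each individual outcome is still random.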

The theoretical promise of quantum computing is immense. Experts envision applications ranging from the discovery of new materials with unprecedented properties and the development of highly targeted pharmaceuticals to revolutionizing artificial intelligence and enhancing cybersecurity. However, translating this theoretical potential into a functional quantum computer has been a formidable scientific and engineering challenge, demanding precise control over delicate quantum states.

The Need for Superior Computational Power: Beyond Classical Reach

- Molecular Simulation: Understanding the behavior of molecules at the atomic level is crucial for drug discovery, materials science, and chemistry. Classical computers struggle to accurately simulate even moderately sized molecules, limiting the pace of innovation.

- Optimization Problems: Many real-world problems, such as routing delivery trucks, managing financial portfolios, or optimizing airline schedules, involve finding the best solution among an astronomically large number of possibilities. Classical algorithms become intractable for these problems as they scale.

- Cryptography: The security of much of our digital infrastructure relies on public-key schemes such as RSA, which depend on the computational difficulty of factoring large numbers. A sufficiently large, error-corrected quantum computer running Shor’s algorithm could break these encryption methods, necessitating the development of quantum-resistant cryptography.
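
To make the cryptography point concrete, here is a minimal sketch of the classical arithmetic around Shor’s algorithm. Shor’s algorithm reduces factoring to finding the order of a number modulo n; the quantum computer accelerates only that order-finding step. In this sketch the order is found by brute force as a stand-in, and the function names `find_order` and `factor_from_order` are illustrative.

```python
from math import gcd

def find_order(a, n):
    """Smallest r > 0 with a**r % n == 1, found by brute force.
    Requires gcd(a, n) == 1. This is the step a quantum computer would speed up."""
    r, value = 1, a % n
    while value != 1:
        value = (value * a) % n
        r += 1
    return r

def factor_from_order(a, n):
    """Classical post-processing: derive two factors of n from the order of a."""
    if gcd(a, n) != 1:
        return None                  # a already shares a factor with n
    r = find_order(a, n)
    if r % 2 == 1:
        return None                  # odd order: try a different a
    half = pow(a, r // 2, n)
    if half == n - 1:
        return None                  # trivial square root: try a different a
    return gcd(half - 1, n), gcd(half + 1, n)

print(find_order(7, 15))             # 4
print(factor_from_order(7, 15))      # (3, 5)
```

The brute-force loop takes time that grows exponentially with the number of digits of n, which is why the scheme is safe against classical attackers at realistic key sizes.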

Google’s Journey Towards Quantum Supremacy

Google’s commitment to quantum computing spans more than a decade. The company established its Quantum AI lab with a long-term vision, recognizing the transformative potential of this technology. Over the years, Google has pursued a hardware-centric approach, focusing on building and improving superconducting quantum processors, a leading architecture in the quantum computing landscape.

Their efforts have been characterized by a systematic progression, tackling one challenge after another. This involves not only the fabrication and control of qubits but also the development of sophisticated error correction mechanisms, a paramount concern in quantum computing due to the sensitivity of quantum states to environmental noise.

The concept of “quantum supremacy,” a term coined by physicist John Preskill, refers to the point where a quantum computer can perform a specific computational task that is practically impossible for even the most powerful classical supercomputers to complete within a reasonable timeframe. Achieving quantum supremacy is not about replacing classical computers entirely but about demonstrating a definitive performance advantage for a well-defined problem.

Google’s Sycamore processor was a landmark achievement in this pursuit. Sycamore has 54 superconducting qubits, 53 of which were functional in the experiment, and it was designed to tackle a sampling problem that is extremely difficult for classical computers. The demonstration, published in Nature in 2019, showed that Sycamore could perform the calculation in approximately 200 seconds, a task that Google estimated would take the world’s most powerful supercomputer about 10,000 years. This result, while disputed by other research groups, solidified the notion that quantum computers were beginning to outpace their classical counterparts for certain specialized tasks.

The Sycamore Experiment: A Glimpse of Quantum Advantage

- The Chosen Problem: Random circuit sampling was selected for the Sycamore demonstration. This involves executing a randomly generated quantum circuit and then sampling the output distribution of the qubits. Verifying the correctness of the output on a classical computer becomes exponentially harder as the number of qubits and circuit depth increase.

- The Hardware: Sycamore employed a 2D grid of superconducting qubits, each coupled to its nearest neighbors. This architecture allowed for complex interactions and the execution of algorithms requiring multi-qubit gates.

- The Outcome: The Sycamore processor reportedly completed the task in 200 seconds. Estimates for the classical computation ranged from 10,000 years (Google’s original estimate) to 2.5 days (IBM’s counter-claim, which assumed a simulation making heavy use of a supercomputer’s disk storage). Regardless of the precise classical estimate, the achievement represented a significant computational speedup.
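
A quick calculation shows how far apart the speedup figures are, using only the 200-second runtime and the two classical estimates quoted above. The year length is simplified to 365 days.

```python
SECONDS_PER_DAY = 24 * 60 * 60
SECONDS_PER_YEAR = 365 * SECONDS_PER_DAY   # leap years ignored

quantum_seconds = 200

# The two classical runtime estimates quoted in the text, in seconds.
classical_estimates = {
    "10,000 years": 10_000 * SECONDS_PER_YEAR,
    "2.5 days": 2.5 * SECONDS_PER_DAY,
}

speedups = {
    label: seconds / quantum_seconds
    for label, seconds in classical_estimates.items()
}

for label, factor in speedups.items():
    print(f"{label}: about {factor:,.0f}x faster")
```

The larger estimate implies a speedup of about 1.6 billion, the smaller one a speedup of about a thousand. Both are substantial, but they differ by six orders of magnitude, which is why the classical baseline was so heavily debated.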

The Recent Breakthrough: Reaching the Threshold from the 1990s

The recent advancements from Google’s Quantum AI team are not merely an incremental improvement on their previous work; they represent a crucial inflection point, moving the field beyond the theoretical musings and early demonstrations that characterized quantum computing research in the 1990s and early 2000s. While early research laid the groundwork, focusing on fundamental principles and very small-scale systems, the recent progress suggests a maturation of the technology towards practical usability.

This latest breakthrough involves significant improvements in operational qubit count, coherence times, and computational fidelity. Coherence time refers to how long a qubit can maintain its quantum state before succumbing to environmental noise. Longer coherence times are essential for performing more complex computations that require multiple sequential operations. Computational fidelity refers to the accuracy with which quantum gates can be executed on qubits. High fidelity is critical for minimizing errors that can propagate and corrupt the final result of a quantum computation.
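
The interplay between coherence time and fidelity can be sketched with a crude model. It assumes that gate errors are independent, so fidelities multiply, and that gates run one after another. The numbers below (a 25 ns gate, a 100 µs coherence time, and the listed fidelities) are illustrative assumptions, not figures reported by Google.

```python
def circuit_fidelity(gate_fidelity, num_gates):
    """Crude estimate: independent errors, so per-gate fidelities multiply."""
    return gate_fidelity ** num_gates

def gates_within_coherence(coherence_time_ns, gate_time_ns):
    """Rough upper bound on sequential gates before the qubit decoheres."""
    return coherence_time_ns // gate_time_ns

# Hypothetical hardware parameters, chosen only for illustration.
max_gates = gates_within_coherence(coherence_time_ns=100_000, gate_time_ns=25)
print("sequential gates available:", max_gates)

for fidelity in (0.99, 0.999, 0.9999):
    overall = circuit_fidelity(fidelity, 1000)
    print(f"gate fidelity {fidelity}: 1000-gate circuit succeeds with p = {overall:.4f}")
```

Under this model, a 1000-gate circuit almost never succeeds at 99% gate fidelity, succeeds about a third of the time at 99.9%, and about nine times in ten at 99.99%. Small gains in per-gate fidelity therefore translate into large gains in usable circuit depth.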

The scale of these improvements signifies a move from experimental curiosities towards the realm of fault-tolerant quantum computing. Fault tolerance in quantum computing is the ability to perform computations reliably even in the presence of noise and errors. This is a monumental challenge, as quantum systems are inherently fragile. The recent work by Google suggests they are making substantial progress in mitigating these errors, bringing the possibility of running error-corrected quantum algorithms within reach.

The Significance of Improved Qubit Quality and Control

- Increased Qubit Count: While raw qubit numbers are not the sole determinant of quantum power, a larger number of high-quality qubits is essential for tackling more complex problems. Google’s progress indicates an ability to consistently produce and control a greater number of these delicate quantum bits.

- Extended Coherence Times: The duration for which qubits remain in a usable quantum state has a direct impact on the complexity of algorithms that can be executed. Extended coherence times allow for more intricate quantum circuits to be programmed and run without significant degradation of information.

- Enhanced Gate Fidelity: The precision with which quantum operations (gates) are performed on qubits is paramount. Improvements in gate fidelity mean fewer errors are introduced during computation, leading to more reliable and trustworthy results.

Implications for Scientific Discovery and Industrial Innovation

The implications of Google’s quantum breakthrough are far-reaching, promising to accelerate progress in numerous scientific disciplines and drive innovation across various industries. The ability to perform computations that were previously impossible opens up new avenues for exploration and problem-solving.

In materials science, quantum computers could enable the precise simulation of novel materials with desired properties, such as superconductors that operate at room temperature or catalysts that are significantly more efficient. This could lead to breakthroughs in energy storage, transportation, and manufacturing.

The pharmaceutical industry stands to benefit immensely from the power of quantum computing. Simulating the interactions between drug candidates and biological targets with unprecedented accuracy could dramatically speed up the drug discovery and development process, leading to more effective and personalized medicines.

Beyond scientific applications, industries like finance and logistics could see significant transformations. Portfolio optimization, risk analysis, and supply chain management all involve complex optimization problems that quantum computers may eventually help address, although a clear quantum advantage for these workloads has yet to be demonstrated.

However, it is crucial to acknowledge that the path from this breakthrough to widespread practical applications is still ongoing. Significant engineering and algorithmic challenges remain before quantum computers can be routinely deployed for complex industrial tasks.

Transforming Key Research Areas

- Drug Discovery and Development: Simulating molecular interactions to design new drugs and therapies with higher efficacy and fewer side effects.

- Materials Science: Discovering and designing new materials with tailored properties for applications in energy, electronics, and manufacturing.

- Climate Modeling: Developing more accurate and sophisticated climate models to better understand and predict climate change.

- Financial Modeling: Enhancing risk management, portfolio optimization, and fraud detection in the financial sector.

The Road Ahead: Challenges and Future Prospects

While Google’s recent achievements represent a monumental step forward, the journey of quantum computing is far from over. Several significant challenges must be addressed to realize the full potential of this technology.

One of the most pressing challenges is the development of robust error correction mechanisms. As mentioned, quantum systems are highly susceptible to noise and decoherence, which can lead to errors in computation. Implementing effective quantum error correction protocols is critical for achieving fault-tolerant quantum computation, the ultimate goal for solving complex and practical problems.
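
The core idea of error correction, redundancy plus a decoding rule, can be sketched with a classical 3-bit repetition code. This is a deliberately simplified analogue, not the quantum codes used on real hardware: each bit flips independently with probability `p`, and a majority vote recovers the logical bit. The function names are illustrative.

```python
import random

def logical_error_rate(p):
    """Exact failure probability of a 3-bit repetition code with majority vote.
    Decoding fails when two or three of the bits flip."""
    return 3 * p**2 * (1 - p) + p**3

def simulate(p, trials=100_000, seed=0):
    """Monte Carlo estimate of the same failure probability."""
    rng = random.Random(seed)
    failures = 0
    for _ in range(trials):
        flips = sum(rng.random() < p for _ in range(3))
        if flips >= 2:
            failures += 1
    return failures / trials

for p in (0.01, 0.1, 0.4, 0.6):
    print(f"physical {p}: logical {logical_error_rate(p):.5f}, simulated {simulate(p):.5f}")
```

Encoding helps only when the physical error rate is below a threshold, which is 0.5 for this toy code: at 1% physical error the logical error falls to about 0.03%, while at 60% the encoding makes things worse. Quantum codes behave in a similar way but have much lower thresholds, which is why gate fidelity matters so much.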

Scalability remains another key hurdle. While Google has demonstrated impressive qubit counts, building quantum computers with millions of highly interconnected and controllable qubits is a formidable engineering feat. This will require advancements in fabrication techniques, cryogenic engineering, and control systems.

Furthermore, the development of quantum algorithms is an ongoing area of research. The unique nature of quantum computation necessitates the creation of new algorithms that can effectively leverage the power of quantum hardware. While some powerful algorithms like Shor’s and Grover’s exist, many more are needed to unlock the full spectrum of quantum applications.

The collaboration between hardware developers, algorithm researchers, and industry stakeholders will be crucial for navigating these challenges. Education and workforce development programs will also be essential to cultivate the expertise needed to design, build, and operate future quantum computers and to develop the applications that will drive their adoption.

Navigating the Complexities of Quantum Advancement

- Quantum Error Correction: Implementing sophisticated protocols to detect and correct errors that arise from environmental noise and imperfect quantum operations.

- Scalability of Quantum Hardware: Developing methods to reliably manufacture and control increasingly larger numbers of high-quality qubits.

- Algorithm Development: Creating new quantum algorithms that can efficiently solve a broader range of scientific and industrial problems.

- Software and Tooling: Building the necessary software infrastructure, programming languages, and development tools to make quantum computing accessible to a wider audience.

- Interdisciplinary Collaboration: Fostering strong partnerships between physicists, computer scientists, engineers, mathematicians, and domain experts from various industries.

FAQs

What is the Google Quantum AI Threshold Breakthrough 1990s article about?

The article discusses Google’s progress in quantum computing, from the Sycamore “quantum supremacy” demonstration to more recent improvements in qubit count, coherence time, and gate fidelity, and relates that progress to the groundwork laid in the 1990s.

What is quantum supremacy?

Quantum supremacy refers to the point at which a quantum computer can perform a specific, well-defined calculation that is practically impossible for the most powerful classical computer to complete in a reasonable timeframe.

How did Google achieve this breakthrough in quantum computing?

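Google’s research team used the Sycamore processor, which has 54 qubits (53 of them functional in the experiment), to perform a random circuit sampling task in about 200 seconds. Google estimated that the same task would take the world’s fastest supercomputer 10,000 years, an estimate that IBM disputed. The result was presented as a demonstration of quantum supremacy.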

What are the potential implications of this breakthrough in quantum computing?

This breakthrough has the potential to revolutionize fields such as cryptography, drug discovery, and materials science, as quantum computers can solve certain classes of complex problems much faster than classical computers.

What is the significance of the 1990s in relation to this breakthrough?

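The 1990s produced much of the theoretical foundation for the field, including Shor’s algorithm (1994), Grover’s algorithm (1996), and the first quantum error-correcting codes. The term “quantum supremacy” itself came later, coined by John Preskill in 2012. It has taken decades for hardware to advance to the point where these ideas could be tested on real processors.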